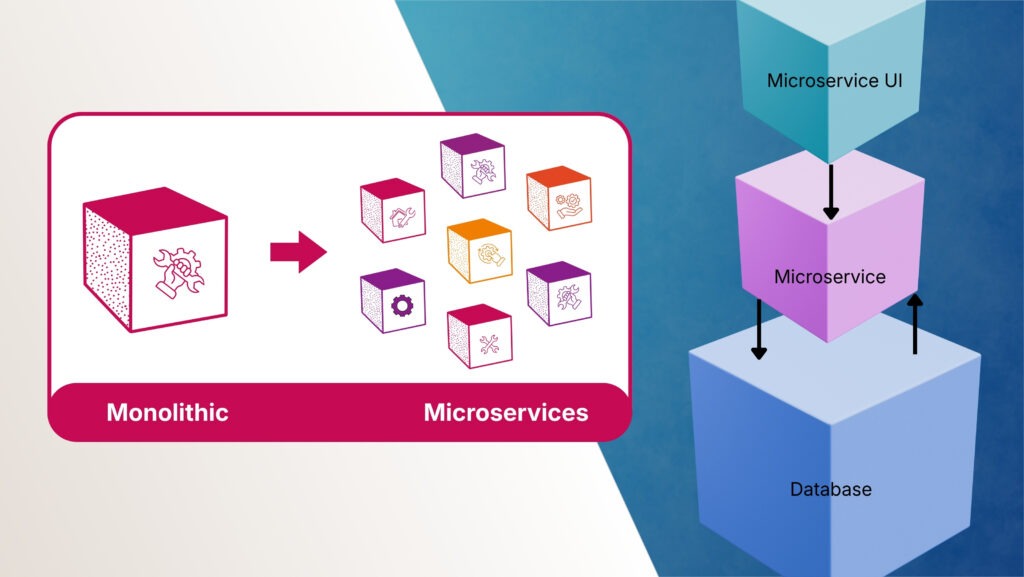

| Problem | Description | Solution |
| --- | --- | --- |
| Slow Step-by-Step Execution | Each workflow step waited for the previous one to finish before starting, like a single queue where everyone waits in line, making the whole system slow. | Asynchronous Communication: Services communicate through messages without waiting, so tasks run independently without blocking each other. |
| No Real Parallel Processing | When a workflow needed to do multiple things at once, the system still did them one by one, losing the benefit of parallel execution. | Independent Service Execution: Each service runs as a separate process, so multiple tasks execute truly in parallel across different services. |
| | All data requests went through one single layer, creating a bottleneck, like having only one cashier for thousands of customers. | Database per Service: Each service owns its own database, so data operations are distributed and don’t create a single bottleneck. |
| | When something broke, finding the exact cause was like finding a needle in a haystack: everything was mixed together with no clear separation. | Centralized Logging & Monitoring: All services send logs to one place with unique trace IDs, making it easy to follow a request across services and find the exact error. |
| Unreliable Data Consistency | Keeping data accurate across long, multi-step workflows was fragile: if one step failed midway, the entire process could end up in a broken state. | Eventual Consistency: Each service handles its own data transaction locally, and services coordinate through events to keep data in sync across the system. |
| | As workflows got more complex, the system became visibly slower: users experienced lag, long loading times, and unresponsive screens. | Horizontal Scaling: Busy services automatically get more instances to handle the load, so users always get fast responses. |
| Limited Security & Login System | The login system couldn’t properly handle multiple organizations, user roles, or modern security standards like OAuth 2.0. | Dedicated Authentication Service: A separate service handles all login, user roles, and security, making it easy to support multiple organizations and modern security standards. |
| Cannot Scale Independently | To handle more load on one feature, the entire application had to be scaled, wasting resources on parts that didn’t need it. | Independent Scalability: Each service scales on its own based on its specific load; only the busy parts get more resources, saving cost. |
| | If any part of the system crashed, the entire platform went down; one bug could take everything offline. | Fault Isolation: Services are isolated from each other, so if one service crashes, the rest continue working normally. |
| | Every small change required redeploying the entire application, making releases slow, risky, and infrequent. | Independent Deployment: Each service is built, tested, and deployed separately, so teams can release updates faster without affecting other services. |
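
To make the Asynchronous Communication and Centralized Logging entries above more concrete, here is a minimal sketch in Python. It is an illustration under stated assumptions, not the platform's actual implementation: the service names (`order_service`, `billing_service`), the event shape, and the helper `trace` are all hypothetical, an in-memory `asyncio.Queue` stands in for a real message broker, and a plain list stands in for a central log store.

```python
import asyncio
import uuid


async def order_service(queue, log):
    """Hypothetical producer: publishes an event and returns without
    waiting for any consumer to process it."""
    trace_id = str(uuid.uuid4())  # one ID that follows the request everywhere
    log.append((trace_id, "order-service", "publishing order_created"))
    await queue.put({"trace_id": trace_id, "type": "order_created"})
    return trace_id


async def billing_service(queue, log):
    """Hypothetical consumer: handles events at its own pace."""
    while True:
        event = await queue.get()
        if event is None:  # sentinel value used here to stop the consumer
            queue.task_done()
            break
        log.append((event["trace_id"], "billing-service",
                    f"handled {event['type']}"))
        queue.task_done()


def trace(log, trace_id):
    """Return every log entry for one request, across all services."""
    return [(svc, msg) for tid, svc, msg in log if tid == trace_id]


async def main():
    queue = asyncio.Queue()  # assumption: stand-in for a message broker
    log = []                 # assumption: stand-in for a central log store
    consumer = asyncio.create_task(billing_service(queue, log))
    trace_id = await order_service(queue, log)
    await queue.put(None)    # ask the consumer to shut down
    await queue.join()       # wait until every queued item is processed
    await consumer
    return trace_id, log


trace_id, log = asyncio.run(main())
for service, message in trace(log, trace_id):
    print(service, message)
```

The producer never calls the consumer directly, so neither blocks the other, and filtering the shared log by `trace_id` reconstructs the path of a single request across both services.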